Migration Utility

Development Overview: The Basics

The table below describes files and folders used by the Migration Utility along with a description and purpose for each resource.

| Overview Item | Needed by Whom? | Brief Description & Purpose |
|---|---|---|
| Script Directory: | | |
| Directory: | | |
| Library/Console: (console application created via dotnet publish) | | |
| Test Project: | | |

Development Troubleshooting

This section outlines general troubleshooting procedures.

Compatibility Errors

- Before the schema is updated, the ODS data is checked for compatibility. If changes are required, the upgrade will stop and an exception will be thrown with instructions on how to proceed.

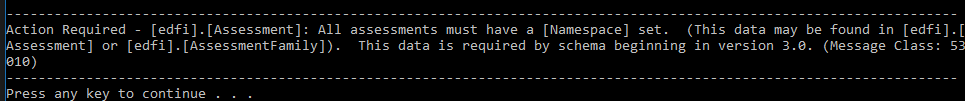

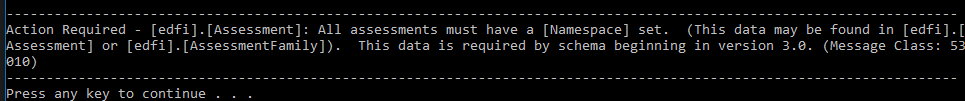

An example error message follows:

- After making the required changes (or writing custom scripts), simply launch the upgrade utility again. The upgrade will proceed where it left off and retry with the last script that failed.

Other Exceptions During Development

- Similar to compatibility error events, the upgrade will halt and an exception message will be generated during development if a problem is encountered.

- After making updates to the script that failed, simply re-launch the update tool. The upgrade will proceed where it left off starting with the last script that failed.

- If you are testing a version that is not yet released, or if you need to re-execute scripts that were renamed or modified during active development: restore your database from backup and start over.

- Similar to other database migration tools, a log of successfully executed scripts is stored in the default journal table. DbUp's default location is [dbo].[SchemaVersions].

- A log file containing errors/warnings from the most recent run may be found by default in "{YourInstallFolder}\.store\edfi.suite3.ods.utilities.migration\{YourMigrationUtilityVersion}\edfi.suite3.ods.utilities.migration\{YourMigrationUtilityVersion}\tools\netcoreapp3.1\any\Ed-Fi-Migration.log".

Additional Troubleshooting

- The step-by-step usage guide below contains runtime troubleshooting information.
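
During development, the DbUp journal table mentioned under Other Exceptions During Development can be queried directly to see which scripts have already been recorded as executed. The following is a minimal sketch, assuming a SQL Server ODS and DbUp's default journal layout (columns [Id], [ScriptName], and [Applied]); adjust it if your journal table was configured differently.

```sql
-- Illustrative query only; not part of the Migration Utility.
-- Assumes DbUp's default SQL Server journal columns: [Id], [ScriptName], [Applied].
SELECT TOP (20)
       [ScriptName],
       [Applied]
FROM [dbo].[SchemaVersions]
ORDER BY [Applied] DESC, [Id] DESC;  -- most recently journaled scripts first
```
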

Design/Convention Overview

The table below outlines some important conventions in the as-shipped Migration Utility code.

| What | Why | Optional Notes |
|---|---|---|
| In-place upgrade: Database upgrades are performed in place rather than creating a new database copy. | Extensions. As a secondary concern, this upgrade method was chosen to ease the upgrade process for a cloud-based ODS (e.g., on Azure). | |
| Sequence of events that occur during upgrade: Specifics differ for each version, but in general the upgrade sequence executes as follows | Minimize the number of scripts with complex dependencies on other scripts in the same directory/upgrade step. | The suite 2 to suite 3 upgrade is a good example case to demonstrate the upgrade steps working together due to its larger scale: |
| One script per table in each directory, where possible: Scripts are named in the format: #### TableName [optional_tags].sql. This convention does not apply to operations that are performed dynamically. | Troubleshooting, timeout prevention. Custom, unknown extensions on the ODS are common. As part of the process of upgrading a highly customized ODS, an installer is likely to run into a SQL exception somewhere in the middle of the upgrade (usually caused by a foreign key dependency, schema-bound view, etc.). In general, we do not want to attempt to modify an unknown/custom extension on an installer's behalf to try to prevent this from happening. It is important that an installer be aware of each and every change applied to their custom tables. Migration of custom extensions will be handled by the installer. Considering the above, in the event an exception does occur during upgrade, we want to make the troubleshooting process as easy as possible. If an exception is thrown, an installer should immediately be able to tell: Many issues may be fixable from the above information alone. If more detail is needed, the installer can view the code in the referenced script file. By separating script changes by table, we make an effort to ensure that there are only a few lines to look through (rather than hundreds). In addition, each script will be executed in a separate transaction. Operations such as index creation can take a long time on some tables with a large ODS. Splitting the code into separate transactions helps prevent unexpected timeout events. | The major downside of this approach is the large number of files it can produce. For example, the suite 2 to suite 3 upgrade was a case where all existing tables saw modifications. This convention generates a change script for every table in more than one directory. With updates becoming more frequent in the future, future versions should not be impacted as heavily. |
| Most change logic is held in SQL scripts (as of V3) As of | As of Given this advantage, effort was made to ensure that each part of the migration tool (console utility, library, integration tests) could be replaced individually as needed. The current upgrade utility contains a library making use of DbUp to drive the upgrade process. In the future, if/when this tool no longer suits our needs, we should be able to take existing scripting and port it over to an alternative upgrade tool (such as RoundhousE), or even a custom-built tool if the need ever arises. This convention could (and should) change in the future if upgrade requirements become too complex to execute from SQL scripting alone. | |
| Two types of data validation/testing options | Prevent data loss. The first type of validation (dynamic, SQL-based) is executed on data that we know should never change during the upgrade. The second type of data validation, integration-test-based, is used to test the logic and transformations where we know the data should change: Together, the two validation types (validation of data that changes, and validation of data that does not change) can be used to create test coverage wherever it is needed for a given ODS upgrade. | The dynamic validation is performed via reusable stored procedures that are already created and available during upgrade. See scripts in the "* |

Compatibility Conditions

This section describes compatibility conditions (i.e., requirements that may need intervention for the compatibility tool to function properly) and suggested remediation.

Other Compatibility Conditions

There are several other less common items not included above. The migration utility will check for these items automatically and provide guidance messages as needed. For additional technical details, please consult the Usage Troubleshooting Guide below.

Ed-Fi ODS Migration Tool Parameter Reference

| Parameter | Description | Example | Required? |
|---|---|---|---|
| --Database | Database connection string | --Database "Data Source=YOUR\SQLSERVER;Initial Catalog=Your_EdFi_Ods_Database;Integrated Security=True" | Yes |
| --Engine | Database engine type (SQLServer or PostgreSQL) | --Engine PostgreSQL | No (defaults to SQLServer) |
| --DescriptorNamespace | Descriptor namespace prefix to be used for new and upgraded descriptors. Namespace must be provided in suite 3 format as follows: Valid characters for an education organization name: alphanumeric and Script Usage: Provided string value will be escaped and substituted directly in applicable SQL where the | --DescriptorNamespace "uri://ed-fi.org" | Yes |
| --CredentialNamespace | Namespace prefix to be used for all new Credential records. Namespace must be provided in suite 3 format as follows: Valid characters for an education organization name: alphanumeric and Script Usage: Provided string value will be escaped and substituted directly in applicable SQL where the | --CredentialNamespace "uri://ed-fi.org" | Yes, if table edfi.StaffCredential has data. Optional if table edfi.StaffCredential is empty. |
| --CalendarConfigFilePath | Path to calendar configuration, which must be accessible from your SQL Server. Script Usage: Provided string value will be substituted directly in dynamic SQL where the | --CalendarConfigFilePath "C:\PATH\TO\YOUR\CALENDAR_CONFIG.csv" | Single-Year ODS: No (unless prompted by the upgrade tool). Multi-Year ODS: Yes. |
| --DescriptorXMLDirectoryPath | Path to directory containing 3.1 descriptors for import, which must be accessible from your SQL Server. Script Usage: Provided string value will be substituted directly in dynamic SQL where the | --DescriptorXMLDirectoryPath "C:\PATH\TO\YOUR\DESCRIPTOR\XML" | Local Upgrade: No (applicable to most cases). Remote Upgrade: Yes. Used if the Descriptor XML directory has been moved to a different location accessible to your SQL Server. |
| --BypassExtensionValidationCheck | Permits the migration tool to make changes if extensions or external schema dependencies have been found. | --BypassExtensionValidationCheck | Extended ODS: Yes. This includes any dataset with an extension schema or foreign keys pointing to the Ed-Fi schema. Others: No. |
| --CompatibilityCheckOnly | Perform a dry run for testing only to check ODS compatibility without making additional changes. The database will not be upgraded. | --CompatibilityCheckOnly | No. This is an optional feature. |
| --Timeout | SQL command query timeout, in seconds. | --Timeout 1200 | No. (Useful mainly for development and testing purposes.) |
| --ScriptPath | Path to the location of the SQL scripts to apply for upgrade, if they have been moved. | --ScriptPath "C:\PATH\TO\YOUR\MIGRATION_SCRIPTS" | No. (Only needed if scripts have been moved from the default location. Useful mainly for development and testing purposes.) |
| --FromVersion | The ODS/API version you are starting from. | --FromVersion "3.1.1" | No. The migration utility will detect your starting point. |
| --ToVersion | The ODS/API version that you would like to upgrade to. | --ToVersion "5.3" | No. The migration utility will take you to the latest version by default. |
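
Whether --CredentialNamespace is required depends on the contents of edfi.StaffCredential, as noted in the table. A quick way to find out before launching the utility might look like the sketch below. It assumes a SQL Server ODS and is purely illustrative; the output column name and status strings are made up for this example and are not produced by the utility.

```sql
-- Illustrative pre-flight check; not part of the Migration Utility.
-- Reports whether edfi.StaffCredential contains any rows.
SELECT CASE
           WHEN EXISTS (SELECT 1 FROM [edfi].[StaffCredential])
               THEN 'Has data: --CredentialNamespace is required'
           ELSE 'Empty: --CredentialNamespace is optional'
       END AS CredentialNamespaceStatus;  -- hypothetical column name
```
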

Usage Troubleshooting Guide

The section below provides additional guidance for many common compatibility issues that can be encountered during the upgrade process.

| Error received during upgrade | Explanation | How to fix it |
|---|---|---|
| Action Required: Unable to proceed with migration because the BypassExtensionValidationCheck option is disabled ... | An external dependency on the edfi schema has been found. As a courtesy, the migration tool will not proceed with the upgrade process without your permission. Common Examples: Why: This notification is intended to bring extension items to your attention that will require manual handling. All primary keys and indexes on the | After reviewing the data and dependencies on your extension tables, add the Please review Step 8. Write Custom Migration Scripts for Extensions (above) before proceeding. |
| Action Required: edfi.StaffCredential ... | The column StateOfIssueStateAbbreviationTypeId must be non-null for all records. This value will become part of a new primary key on the 3.1 schema. | Add a [StateOfIssueStateAbbreviationTypeId] for all records in [edfi].[StaffCredential]. This is the abbreviation for the name of the state (within the United States) or extra-state jurisdiction in which a license or credential was issued. |
| Action Required - An EducationOrganizationId must be resolvable for every student in the following table(s) for compatibility with the upgraded schema starting in version 3.0: (Provided list of tables includes one or more of the following): | The upgrade utility must be able to locate an [EducationOrganizationId] for every student with data in the listed tables to proceed. Beginning in v3.1, the schema structure requires that these student information items be defined separately for each associated EducationOrganization rather than simply linking them to a student. | The easiest way to meet this requirement is to ensure that every student has a corresponding record in [edfi].[StudentSchoolAssociation] or [edfi].[StudentEducationOrganizationAssociation]. The upgrade tool will use this information to handle the rest of the data conversion tasks for you. |
| Action Required: edfi.Assessment ... | All assessments must have a [Namespace] set. (This data may be found in [edfi].[Assessment] or [edfi].[AssessmentFamily].) In suite 3, the schema requires that this column be non-null. | Add a [Namespace] for all assessment records. |
| Action Required: edfi.OpenStaffPosition ... | There may be no two duplicate This uniqueness is required for the upgraded primary key on this table. | Update the RequisitionNumber values on edfi.OpenStaffPosition. Ensure that the same value is not used twice for the same education organization. |
| Action Required: edfi.RestraintEvent ... | There may be no two duplicate This uniqueness is required for the upgraded primary key on this table. | Update the |
| Action Required: edfi.GradingPeriod ... | There may be no two duplicate PeriodSequence values for the same school during the same grading period. Additionally, if prompted by the upgrade tool, all This compatibility requirement is a result of a primary key change between suite 2 and suite 3. | Ensure that there are no two records with the same SchoolId, GradingPeriodDescriptorId, PeriodSequence, and SchoolYear. (Requirements may be less strict than noted in the above query for some multi-year calendars. See the compatibility check script in the 01 Bootstrap directory for exact technical requirements.) |
| Action Required: edfi.DisciplineActionDisciplineIncident ... | Every record in [edfi].[DisciplineActionDisciplineIncident] must have a corresponding record in [edfi].[StudentDisciplineIncidentAssociation] with the same [StudentUSI], [SchoolId], and [IncidentIdentifier]. The V3 schema no longer allows discipline action records with students that are not associated with the discipline incident. A foreign key will be added to the new schema enforcing this. | |
| Calendar configuration file error - various similar messages may appear that mention a table name and a list of school ids. Example error: Found {#} date ranges in [edfi].[ | The calendar configuration file contains the start date and end date for each school year. To support the new calendar features in V3, the migration tool uses this configuration file to assign a SchoolYear to all CalendarDate-related entries in the database. There are several variations of this type of error, which all have a similar meaning. The migration tool found date records in the specified table that could not be assigned a school year based on the BeginDate and EndDate information provided. | Either the calendar configuration file will need to be edited, or data in the specified table will need to be modified. Example query for [edfi].[CalendarDate]: SELECT * FROM [edfi].[CalendarDate] WHERE [SchoolId] = {School_Id_from_error_message} AND ([Date] < {BeginDate_from_calendar_configuration_file} OR [Date] > {EndDate_from_calendar_configuration_file}) |
| SqlException: or similar: The object '{OBJECT_NAME_HERE}' is dependent on column '{COLUMN_NAME_HERE}'. | This type of unhandled SQL exception occurs when the migration process tries to alter an item that is being referenced by an external object, such as a foreign key on another table or a schema-bound view. Common causes | Make sure that you have dropped ALL foreign keys and views from other schemas that have a dependency on the You do not need to drop any constraints on the edfi schema itself: this is handled automatically for you. For tips on locating foreign key dependencies quickly, see the query in Step 8b. above. |
| A data validation failure was encountered on destination object {table name here} ... | Data was modified in a location that was not expected to change. A validation check from script directory Scripts/02 Setup/{version}/## Source Validation Check has failed. | This state is triggered if certain records are modified in the middle of migration that the upgrade utility expected would remain unchanged. The upgrade will be halted for you as a precaution to prevent unintended data loss. Common Causes: If you are testing potential updates to the suite 2 ODS data by hand (data only / no custom scripts): If you are writing your own custom scripts: If your suite 2 Ed-Fi ODS schema is unmodified, and you are not making edits to the current scripting or data, this error should not occur during normal operation. |
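
Several of the conditions in the table above can be checked by hand before re-running the upgrade. The queries below are a rough sketch, assuming a SQL Server ODS. Table and column names come from the guide above; the result column aliases are made up for this example. The utility's own compatibility check scripts remain the authoritative definition of each requirement.

```sql
-- Illustrative checks only; not part of the Migration Utility.

-- edfi.StaffCredential: count rows missing the state-of-issue value.
SELECT COUNT(*) AS MissingStateOfIssue
FROM [edfi].[StaffCredential]
WHERE [StateOfIssueStateAbbreviationTypeId] IS NULL;

-- edfi.DisciplineActionDisciplineIncident: list rows with no matching
-- edfi.StudentDisciplineIncidentAssociation record.
SELECT dadi.[StudentUSI], dadi.[SchoolId], dadi.[IncidentIdentifier]
FROM [edfi].[DisciplineActionDisciplineIncident] AS dadi
WHERE NOT EXISTS (
    SELECT 1
    FROM [edfi].[StudentDisciplineIncidentAssociation] AS sdia
    WHERE sdia.[StudentUSI] = dadi.[StudentUSI]
      AND sdia.[SchoolId] = dadi.[SchoolId]
      AND sdia.[IncidentIdentifier] = dadi.[IncidentIdentifier]
);

-- External dependencies: foreign keys defined in other schemas that
-- reference tables in the edfi schema.
SELECT OBJECT_SCHEMA_NAME(fk.parent_object_id) AS ReferencingSchema,
       OBJECT_NAME(fk.parent_object_id)        AS ReferencingTable,
       fk.[name]                               AS ForeignKeyName,
       OBJECT_NAME(fk.referenced_object_id)    AS ReferencedEdfiTable
FROM sys.foreign_keys AS fk
WHERE OBJECT_SCHEMA_NAME(fk.referenced_object_id) = 'edfi'
  AND OBJECT_SCHEMA_NAME(fk.parent_object_id) <> 'edfi'
ORDER BY ReferencingSchema, ReferencingTable;
```

An empty result (or a count of zero) from a query suggests that the corresponding condition is already satisfied. The last query covers foreign keys only; schema-bound views that depend on the edfi schema would need to be located separately.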